Gestures are increasingly being adopted as the UI (User Interface) of embedded devices so that the devices can be operated "without touching". Developing such a gesture UI, however, is not easy. The device often fails to recognize the intended hand movement, or detection works poorly for hands other than the developer's.

QE for Capacitive Touch V.3.0, a development assistance tool for capacitive touch sensors that was updated in February 2022, lets you develop high-precision gesture software using AI (deep learning) in about 30 minutes.

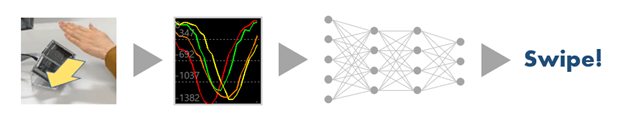

Gesture software created by the QE tool uses deep learning technology. By letting the AI learn how the capacitance values change as a hand approaches multiple electrodes (sensors), the software can distinguish gestures such as “Swipe” and “Tap”. The trained AI model is easily converted into a program that runs on the actual device, achieving safe and high-speed detection.
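To make the idea of "capacitance that changes across multiple electrodes" more concrete, here is a minimal sketch of why a swipe and a tap look different in the sensor data. It is not the deep learning model that the QE tool generates. It substitutes a hand-written peak-timing rule purely for illustration. The data layout (`frames[t][e]`), the left-to-right electrode order, the sample values, and the names `classify_gesture` and `min_shift` are all assumptions made for this example.

```python
from typing import Sequence


def peak_index(samples: Sequence[float]) -> int:
    """Return the time step at which one electrode's reading is largest."""
    return max(range(len(samples)), key=lambda i: samples[i])


def classify_gesture(frames: Sequence[Sequence[float]], min_shift: int = 2) -> str:
    """Classify a short recording with a simple peak-timing heuristic.

    frames[t][e] is the capacitance change of electrode e at time step t
    (hypothetical layout; electrodes are assumed ordered left to right).
    """
    n_electrodes = len(frames[0])
    # For each electrode, find when the hand was closest (largest reading).
    peaks = [
        peak_index([frame[e] for frame in frames]) for e in range(n_electrodes)
    ]
    # Compare the peak time of the rightmost and leftmost electrodes.
    shift = peaks[-1] - peaks[0]
    if shift >= min_shift:
        return "swipe_right"  # left electrode peaked first
    if shift <= -min_shift:
        return "swipe_left"   # right electrode peaked first
    return "tap"              # all electrodes peaked at about the same time


# Made-up sample recordings: 5 time steps x 3 electrodes.
SWIPE_RIGHT = [
    [5, 1, 0],
    [9, 4, 1],
    [4, 9, 4],
    [1, 4, 9],
    [0, 1, 5],
]
TAP = [
    [1, 1, 1],
    [5, 6, 5],
    [9, 9, 9],
    [5, 6, 5],
    [1, 1, 1],
]

if __name__ == "__main__":
    print(classify_gesture(SWIPE_RIGHT))
    print(classify_gesture(TAP))
```

A fixed rule like this tends to break when hand size, speed, or distance varies, which is the kind of variation a model trained on recorded data is meant to absorb.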

From here, using the tool we developed, I will explain how gestures are created and operated, and how accurately the completed gestures are recognized.

Usage example of e-AI x 3D gesture recognition:

- Mechanism

- Gesture Design

- Development of gestures < Part 1 - Creation of AI learning data >

- Development of gestures < Part 2 - AI learning, C-source integration >

- Development of gestures < Part 3 - Monitoring >

- Recognition accuracy of the completed gestures

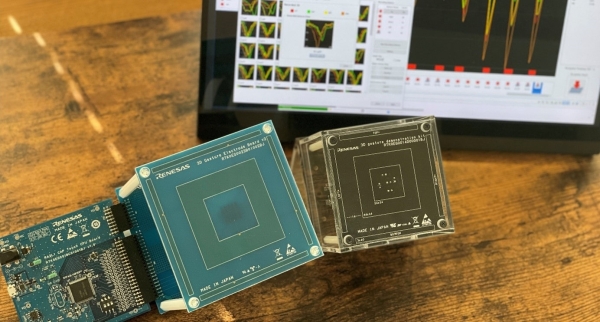

QE for Capacitive Touch is a development tool that extends the e² studio integrated development environment from Renesas. The 3D gesture recognition feature provides a touch-free user interface by combining Renesas' capacitive touch key solution with the e-AI solution.

For more details and to download the software, see the following product information pages.