Overview

Description

Artificial intelligence (AI) based on deep neural networks is already delivering new value in the IT sector. Many developers want to bring AI to embedded applications, but AI processing is computationally intensive, and traditional CPU- or GPU-based solutions are hard to fit into embedded devices because they either lack the performance or draw too much power. AI is also evolving constantly, with new algorithms appearing regularly.
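To give a sense of scale for "many calculations", the sketch below counts the multiply-accumulate (MAC) operations in a single convolution layer and a single fully connected layer using the standard formulas. The layer sizes are illustrative assumptions and are not taken from any Renesas material.

```python
def conv_macs(out_h: int, out_w: int, in_ch: int, out_ch: int, kernel: int) -> int:
    """MACs for one square-kernel convolution layer (bias ignored)."""
    return out_h * out_w * out_ch * in_ch * kernel * kernel


def fc_macs(in_features: int, out_features: int) -> int:
    """MACs for one fully connected layer (bias ignored)."""
    return in_features * out_features


# Illustrative sizes only: a 3x3 convolution with 64 input and 64 output
# channels producing a 224x224 feature map, and a 4096 -> 1000 classifier.
conv_total = conv_macs(224, 224, 64, 64, 3)
fc_total = fc_macs(4096, 1000)

print(f"conv layer: {conv_total:,} MACs")
print(f"fc layer:   {fc_total:,} MACs")
```

A single convolution layer of this size already needs roughly 1.85 billion MACs per frame, which is why general-purpose processors struggle to meet embedded performance and power budgets.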

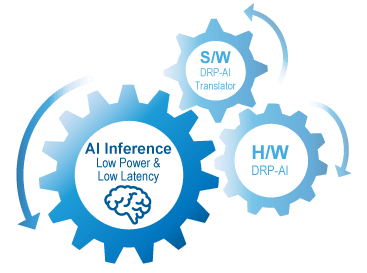

Amid this rapid evolution, Renesas developed an AI accelerator (DRP-AI) and accompanying software (DRP-AI translator) that together deliver high performance and low power consumption and can adapt as AI evolves. Combining DRP-AI with the DRP-AI translator enables AI inference at a level of power efficiency that conventional AI technology cannot match.

Support for new AI models can be added through continuous updates to the DRP-AI translator.

Features of DRP-AI

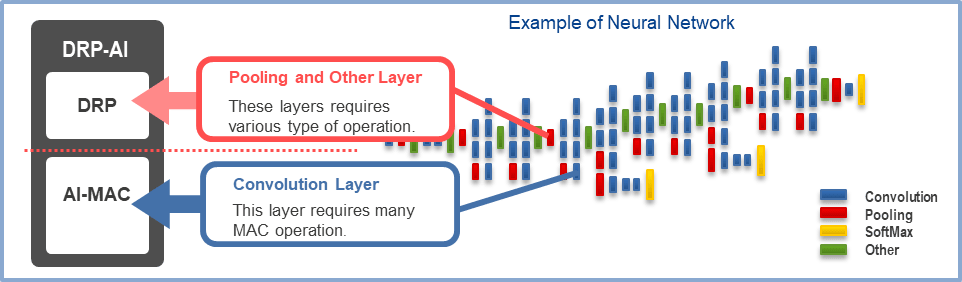

DRP-AI consists of an AI-MAC (multiply-accumulate processor) and a DRP (reconfigurable processor). AI processing runs at high speed by assigning the AI-MAC to operations in convolution and fully connected layers, and the DRP to other complex processing such as preprocessing and pooling layers.
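The division of work described above can be pictured with a small sketch. It is a conceptual illustration only: the `Layer` class, the layer-kind labels, and the `assign_unit` function are hypothetical names invented for this example and do not correspond to any DRP-AI API.

```python
from dataclasses import dataclass

# Assumption: illustrative labels for layer kinds, not DRP-AI identifiers.
AI_MAC_LAYER_KINDS = {"conv", "fully_connected"}


@dataclass(frozen=True)
class Layer:
    name: str
    kind: str


def assign_unit(layer: Layer) -> str:
    """Map a layer to the unit the text says handles it."""
    # Convolution and fully connected layers go to the AI-MAC;
    # everything else (preprocessing, pooling, ...) goes to the DRP.
    return "AI-MAC" if layer.kind in AI_MAC_LAYER_KINDS else "DRP"


def plan(layers: list[Layer]) -> list[tuple[str, str]]:
    """Return (layer name, unit) pairs in execution order."""
    return [(layer.name, assign_unit(layer)) for layer in layers]


model = [
    Layer("resize", "preprocess"),
    Layer("conv1", "conv"),
    Layer("pool1", "pooling"),
    Layer("fc1", "fully_connected"),
]

for name, unit in plan(model):
    print(f"{name:8s} -> {unit}")
```

In practice this assignment is made by the conversion tooling, not by application code; the sketch only mirrors the rule stated in the paragraph.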

For more detailed technical information on DRP-AI, please refer to the following white paper.

White Paper: Embedded AI-Accelerator DRP-AI (PDF | English, 日本語)

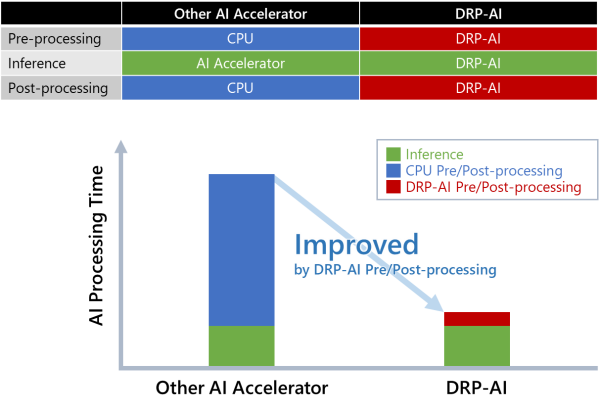

While most AI accelerators specialize only in AI inference and rely on the CPU for pre- and post-processing, DRP-AI integrates pre-processing, post-processing, and AI inference into a single piece of hardware to achieve superior AI processing performance.

Software List

Tool: DRP-AI TVM*1

DRP-AI TVM is a tool that converts trained AI models into a format that can run on DRP-AI. It integrates the DRP-AI accelerator into the proven ML compiler framework Apache TVM*2, which enables support for multiple AI frameworks (ONNX, PyTorch, TensorFlow, etc.). It also lets DRP-AI operate in conjunction with the CPU, so that more AI models can be run.
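The idea of running a model "in conjunction with the CPU" can be sketched as splitting an operator sequence into accelerator segments and CPU fallback segments. This is a simplified illustration, not the DRP-AI TVM implementation: the operator names, the `ACCELERATED_OPS` set, and the `partition` function are all assumptions made for the example.

```python
from itertools import groupby

# Assumption: a made-up set of operators the accelerator can execute.
# The real supported-operator list is defined by the DRP-AI TVM documentation.
ACCELERATED_OPS = {"conv2d", "dense", "relu", "max_pool2d"}


def target_for(op: str) -> str:
    """Choose where a single operator runs."""
    return "DRP-AI" if op in ACCELERATED_OPS else "CPU"


def partition(ops: list[str]) -> list[tuple[str, list[str]]]:
    """Group consecutive operators that share a target into segments."""
    return [(target, list(group)) for target, group in groupby(ops, key=target_for)]


# Hypothetical model: one operator ("custom_nms") is not accelerated.
ops = ["conv2d", "relu", "max_pool2d", "custom_nms", "dense"]

for target, segment in partition(ops):
    print(f"{target:7s}: {segment}")
```

Because unsupported operators fall back to the CPU instead of blocking conversion, a model with a few unusual layers can still run, with most of the work staying on the accelerator.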

The AI model formats and products (MPUs) supported by DRP-AI TVM are as follows.

- Input file format (trained AI model): ONNX, PyTorch, TensorFlow

- Products: RZ/V2M, RZ/V2MA, RZ/V2L, RZ/V2H, RZ/V2N

The table below shows the download site for DRP-AI TVM, a brief description of the tool and information on deliverables.

| | RZ/V2M, RZ/V2MA | RZ/V2L | RZ/V2H, RZ/V2N |
|---|---|---|---|
| Tool summary description | DRP-AI TVM explanation page (Renesas website) | | |
| Tool detailed explanation | DRP-AI TVM (GitHub) | DRP-AI TVM explanatory page (GitHub.IO) | |
| DRP-AI drivers | Included in the DRP-AI Support Package | Available in AI SDK | |
| Linux | Available in Linux Package | | |

Software: DRP-AI Support Package

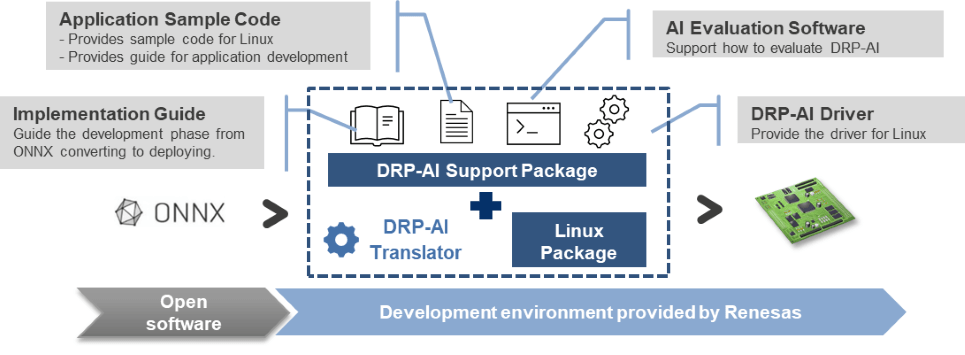

The DRP-AI Support Package provides the driver and guide needed to operate DRP-AI. Download the DRP-AI Support Package now to experience seamless AI development, from open software to device implementation.

*1. DRP-AI TVM is powered by the EdgeCortix MERA™ Compiler Framework.

*2. For more information on Apache TVM, please refer to https://tvm.apache.org

| Title | Description | Type | Company |
|---|---|---|---|
| RZ/V2M DRP-AI Support Package [V7.51] | Software and documentation for the DRP-AI embedded in RZ/V2M. | Software Package | Renesas |
| RZ/V2MA DRP-AI Support Package [V7.50] | Software and documentation for the DRP-AI embedded in RZ/V2MA. | Software Package | Renesas |
| DRP-AI Translator [V1.90] | AI model conversion tool (DRP-AI Translator) for DRP-AI-equipped products. Also used as an internal tool for DRP-AI TVM (required when installing DRP-AI TVM). | Software Package | Renesas |
| DRP-AI TVM (GitHub) | AI model conversion tool (DRP-AI TVM) for DRP-AI-equipped products. Before using this product, check the contents of the linked README.md. | Software Package | Renesas |
| DRP-AI Translator i8 | AI model conversion tool (DRP-AI Translator i8) for DRP-AI-equipped products (INT8 architecture). Also used as an internal tool for DRP-AI TVM (required when installing DRP-AI TVM). | Software Package | Renesas |


Design & Development

Videos & Training

The DRP-AI accelerator embedded in RZ/V series MPUs provides high-speed AI processing at the endpoint while maintaining high power efficiency.
