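When an AI-equipped machine acts on a drifting signal, it has no way of knowing that it is doing the wrong thing. It simply acts with confidence and precision, in the wrong direction. Confidence without accuracy is not intelligence. It is risk.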

Only one thing can close the gap between what a system is told and what is actually happening in the physical world: the analog foundation underneath it. And that foundation is everywhere.

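Artificial intelligence (AI) is now embedded in physical systems of every kind, including factories, vehicles, robots, satellites, and data centers, and has become the layer that runs the physical world. AI has spread into every field, but beneath every intelligent system lies a foundation that makes it possible. That foundation is analog.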

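While the industry is absorbed in debates over AI models, parameters, and compute architectures, a quieter but more consequential shift is under way at the signal level. However sophisticated the software, every autonomous system must ultimately sense the real world, respond to it, and act within it. The interface between the physical and digital worlds is analog, and as systems grow more capable, that interface does not merely scale. It multiplies.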

The "signal chain" is the end-to-end path from the sensor through the analog front end, conversion, synchronization, power, and control to the data the model actually receives.

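The question is no longer whether AI will run at the edge. The question is whether the analog foundation beneath it is built deep enough for AI systems to be not just reliable but fast and precise, delivering the highest level of performance the application demands.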

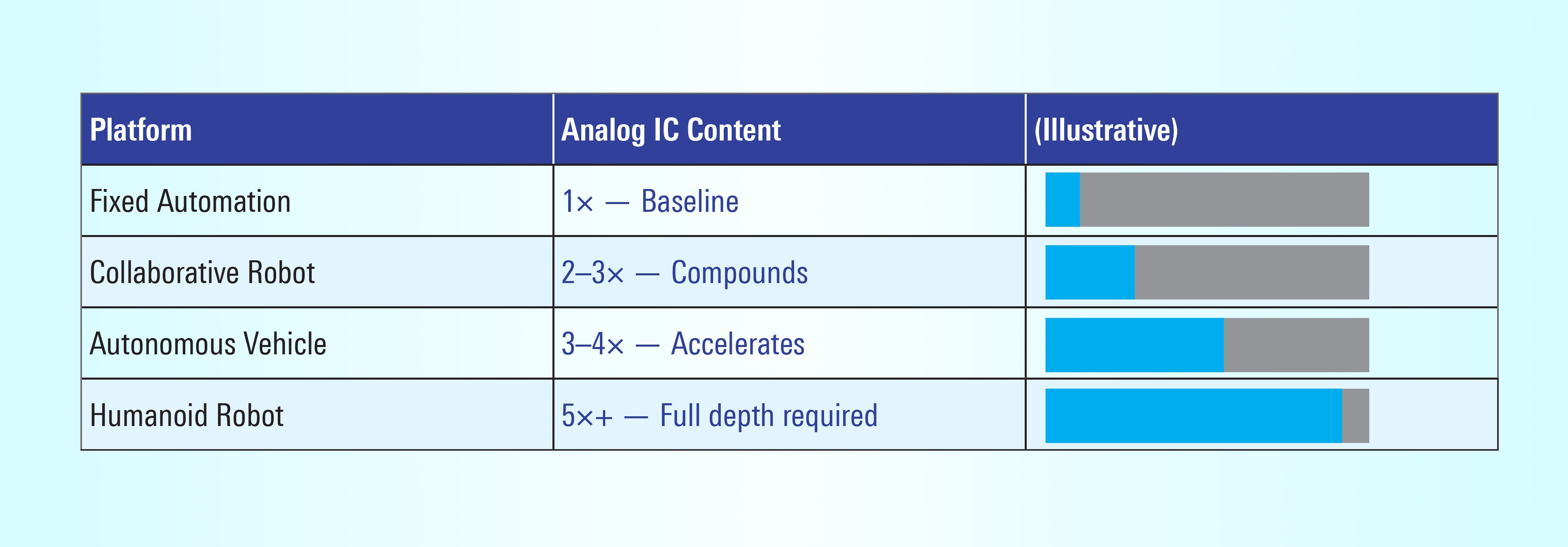

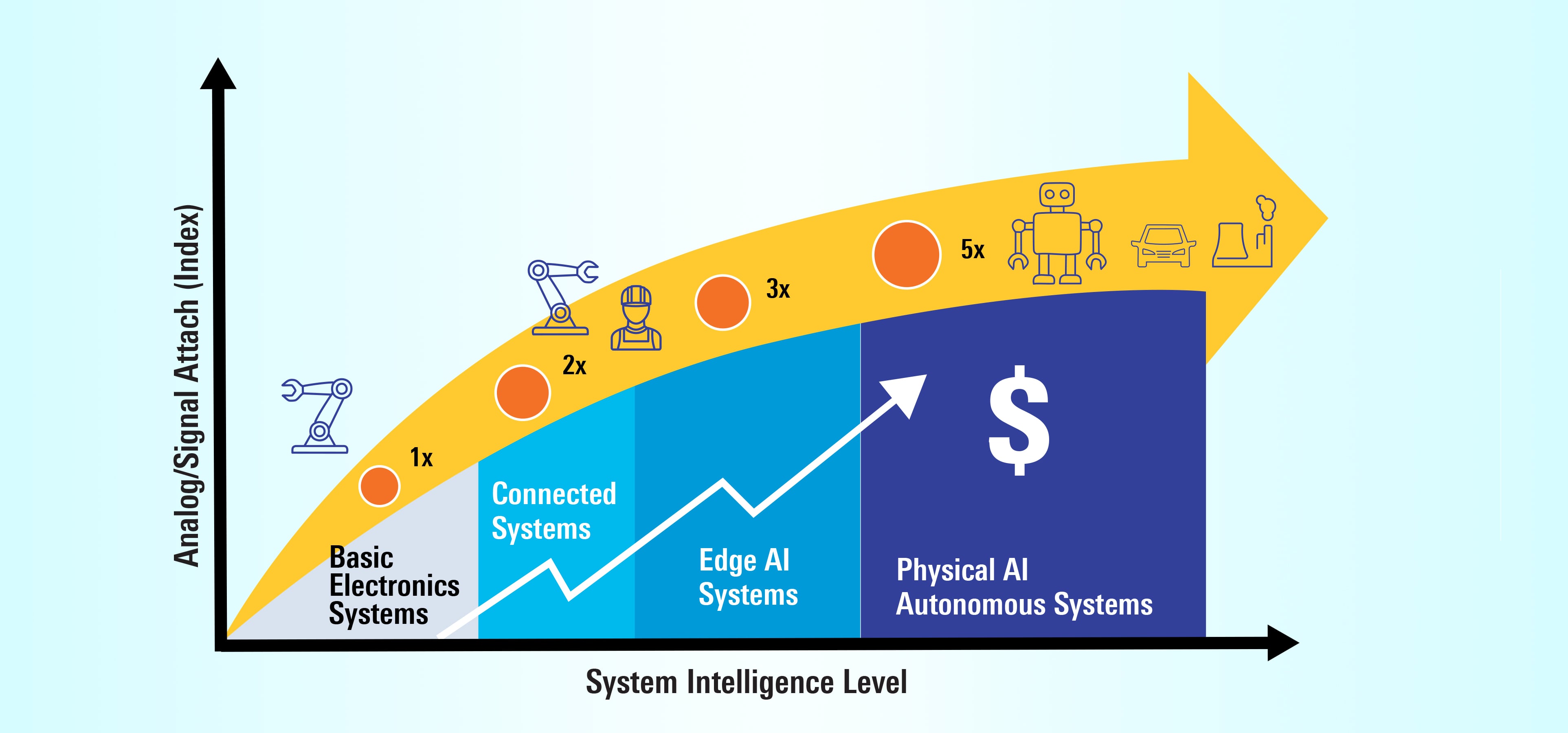

The Analog Attach Curve

To understand what that means in practice, it helps to define a pattern that appears every time physical AI moves from prototype to production.

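The pattern recurs in every domain that physical AI is entering. As machines become more capable, more autonomous, more precise, and more safety-critical, the amount of analog and mixed-signal circuitry needed to run them grows not linearly but exponentially.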

Note: The multipliers in the graph are reference values that illustrate the shape of the curve.

Figure 1. Analog IC content ∝ autonomy

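As Figure 1 shows, analog IC content grows exponentially as autonomy increases. Sensor interfaces, data converters, motor control channels, and power rails multiply, and safety monitoring becomes more stringent.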

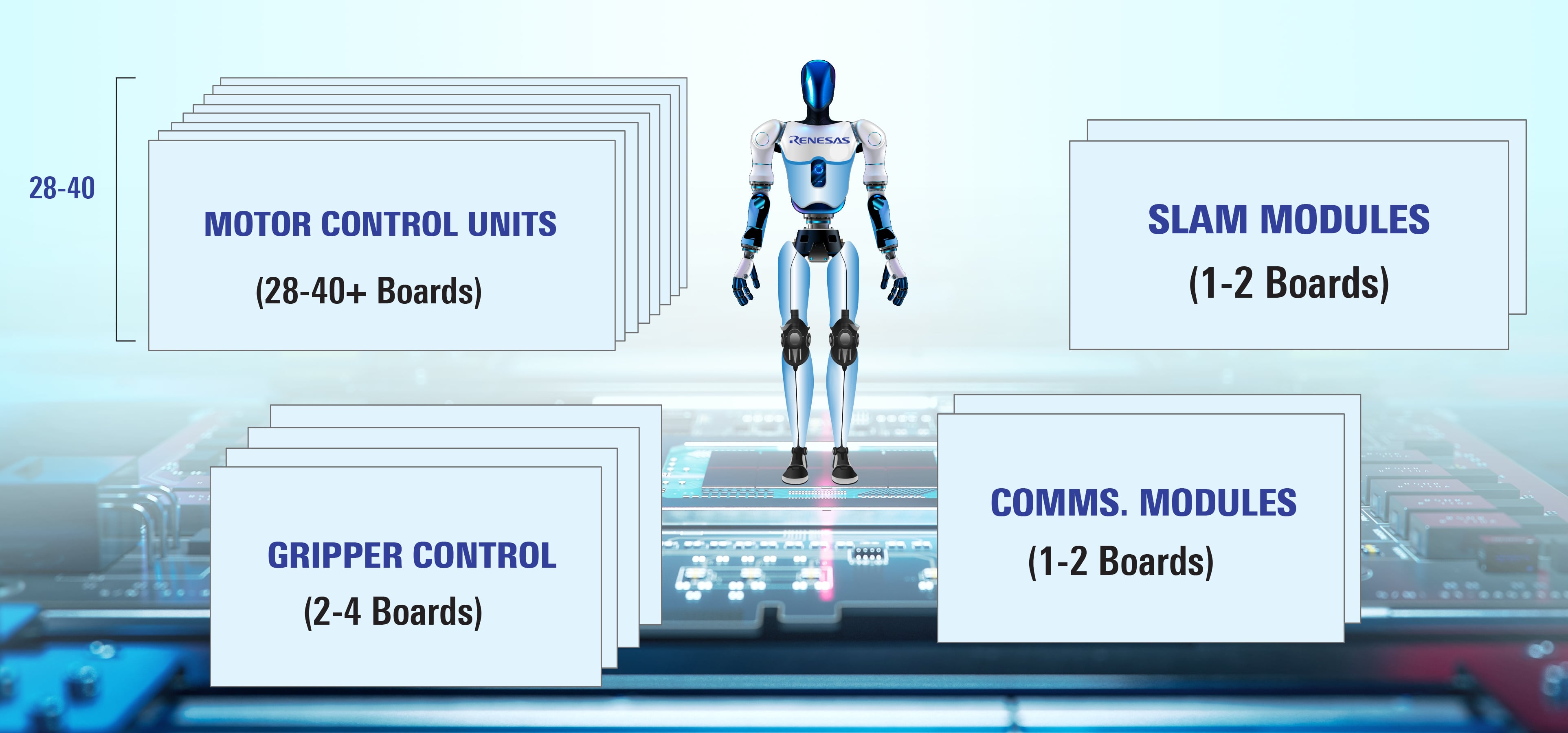

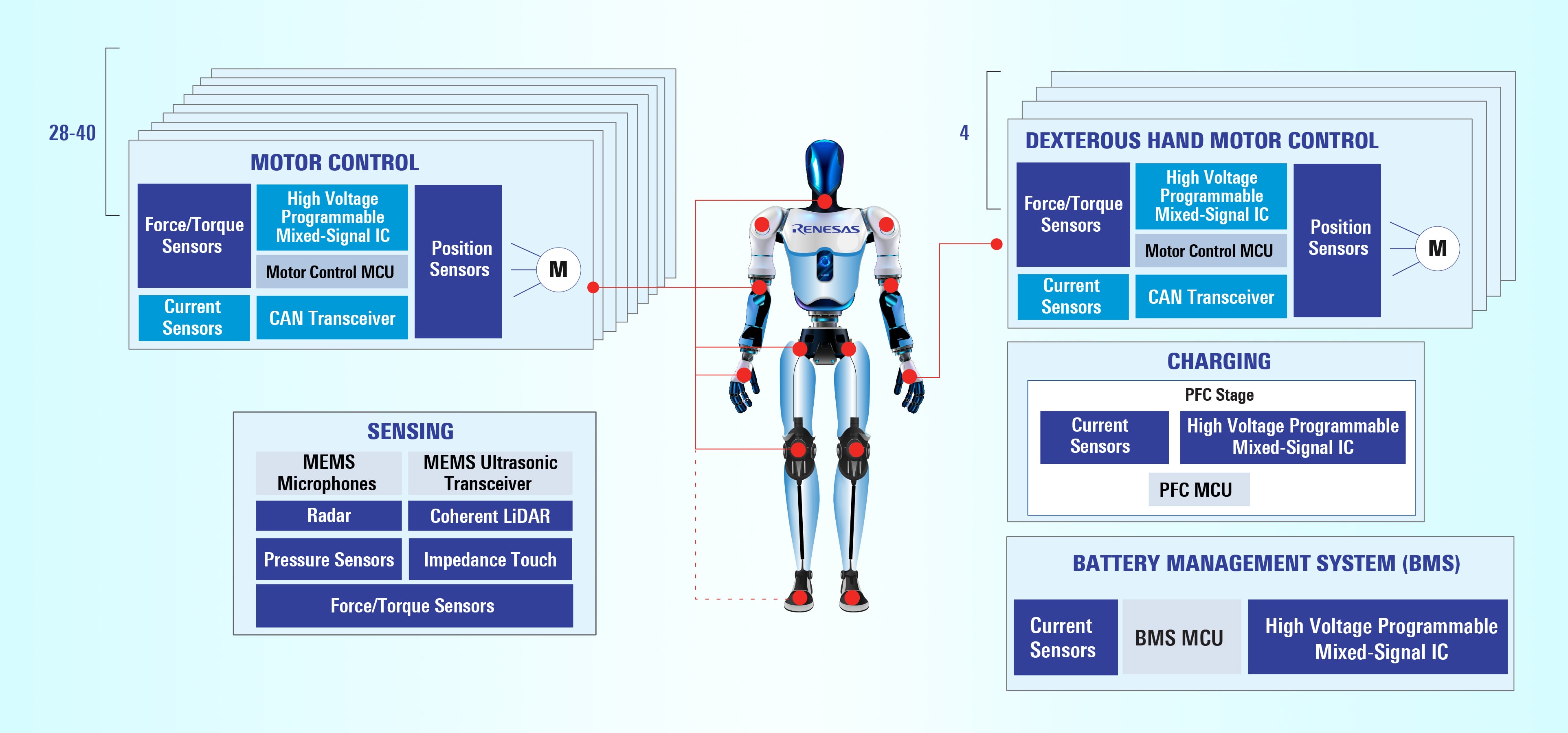

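Humanoid robots are the most striking example of the relationship this curve describes. Every gesture, every step, and every decision ultimately traces back to more than 200 analog ICs. These ICs span motor control at each joint, position sensing (encoder/resolver), torque and force feedback, and power management, and extend into the perception layer, including LiDAR, vision, touch, pressure, and impedance. The same architecture repeats at the fingertips, where a difference of a few millimeters or milliseconds decides whether a grasp succeeds. As intelligence increases, the signal chain deepens, and each layer demands signals that are not merely reliable but sufficiently accurate, fast, and stable.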

Figure 2. Motor control IC count at each joint of a humanoid robot

Figure 3. Signal chain structure in a humanoid robot platform

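The humanoid signal chain repeats at every joint. Each joint includes motor drive and current sensing, position feedback (encoder/resolver), torque/force feedback, local power management, and high-reliability communication, and the chain extends to fingertip pressure and impedance sensing for a stable grasp. Analog is not merely a lower layer beneath AI. Analog is what allows AI to operate in the physical world. And Renesas is positioned to deliver this analog foundation end to end.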

Why Breadth Without Depth Fails

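Industrial environments are electrically harsh. Wide temperature swings, vibration, electromagnetic noise, and long cable runs degrade signal quality in ways that software cannot correct after the fact. For example, a position sensor that drifts by 0.5 degrees under thermal stress without raising an error flag produces an incorrect output that the AI system accepts as correct without question.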
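To give a sense of scale for the 0.5 degree drift mentioned above, the following minimal sketch converts a joint-angle error into a displacement at the end of a rigid link. The function name `tip_displacement_mm` and the 500 mm link length are assumptions made for illustration; neither comes from the article, and a real mechanism has multiple joints, compliance, and other error sources that this ignores.

```python
import math


def tip_displacement_mm(angle_error_deg: float, link_length_mm: float) -> float:
    """Straight-line displacement at the end of a rigid link for a joint-angle error.

    Uses the chord length 2 * L * sin(theta / 2), which is exact for a
    single rigid link rotating about one joint.
    """
    theta = math.radians(abs(angle_error_deg))
    return 2.0 * link_length_mm * math.sin(theta / 2.0)


# 0.5 degrees is the drift quoted in the text.
# 500 mm is a hypothetical link length chosen only for illustration.
DRIFT_DEG = 0.5
LINK_LENGTH_MM = 500.0

error_mm = tip_displacement_mm(DRIFT_DEG, LINK_LENGTH_MM)
print(f"{DRIFT_DEG} deg of drift on a {LINK_LENGTH_MM:.0f} mm link "
      f"moves the tip by about {error_mm:.2f} mm")
```

Under these assumptions the unflagged drift moves the tip by roughly 4.4 mm, which is on the order of the few millimeters that decide whether a grasp succeeds.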

This is the gap between AI that works in the lab and AI that works in the field.

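Closing this gap takes more than a collection of components. It requires an end-to-end signal architecture in which sensing, control, power, and communication are designed to work as one system.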

In the real world, accuracy is not a single specification but a property of the whole system. It lives in calibration, timing, power integrity, and fault-aware control.
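One common way to reason about accuracy as a system property is an error budget that combines the individual contributions. The sketch below uses a root-sum-square (RSS) combination, which assumes the error sources are independent and uncorrelated. That assumption, the names, and every value except the 0.5 degree drift are hypothetical and do not come from the article.

```python
import math


def rss_error(contributions: dict[str, float]) -> float:
    """Root-sum-square combination of independent error contributions."""
    return math.sqrt(sum(value ** 2 for value in contributions.values()))


def worst_case_error(contributions: dict[str, float]) -> float:
    """Straight sum of magnitudes: an upper bound if all errors align."""
    return sum(abs(value) for value in contributions.values())


# Angle-error contributions in degrees.
# Only sensor_drift (0.5) comes from the text; the rest are illustrative placeholders.
budget_deg = {
    "sensor_drift": 0.5,
    "calibration_residual": 0.1,
    "timing_jitter": 0.05,
    "supply_noise": 0.05,
}

total_rss = rss_error(budget_deg)
total_worst = worst_case_error(budget_deg)
dominant = max(budget_deg, key=lambda name: abs(budget_deg[name]))

print(f"RSS total:  {total_rss:.3f} deg")
print(f"Worst case: {total_worst:.3f} deg")
print(f"Dominant contributor: {dominant}")
```

With these placeholder numbers the drift term dominates the budget, which illustrates why improving a single component specification elsewhere in the chain would barely change the system-level result.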

The Next Decade

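The winners will not be the systems with the largest models. They will be the systems that deliver the most reliable performance consistently under real operating conditions: temperature, vibration, latency, noise, power constraints, safety constraints, and long life cycles.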

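That is no longer a software problem. It is a signal chain problem. If you are building AI systems that operate in the real world, treat analog as a primary design axis: design it in from the earliest stage of development, budget for it explicitly, and design sensing, control, power, and communication as one system rather than a collection of parts.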

Key Takeaways

- As autonomy increases, analog and mixed-signal complexity grows dramatically. The deciding factor is no longer model size but system performance, accuracy, and reliability.

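- In the field, drift, electromagnetic interference (EMI), vibration, and cable losses break systems. Upstream signal integrity errors surface as wrong decisions that the AI makes with full confidence.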

- Successful architectures treat sensing, control, power, and communication as one integrated signal system, designed in from the start, explicitly budgeted, and built for real-world use.

AI is going everywhere. The winners will go deep. The race has already begun.

Related Information

For more information, visit renesas.com/analog.