In the new age of data center design, artificial intelligence exerts an outsized influence on how power is generated, distributed, and used to deliver real-time, data-driven outcomes. Traditional cloud data centers were optimized around relatively predictable CPU workloads. Modern AI data centers are dominated by accelerator-heavy systems that behave very differently. The power demands of these systems are driving a fundamental rethinking of data center power architectures and accelerating the adoption of gallium nitride (GaN) semiconductors.

Framing the AI Data Center Power Challenge

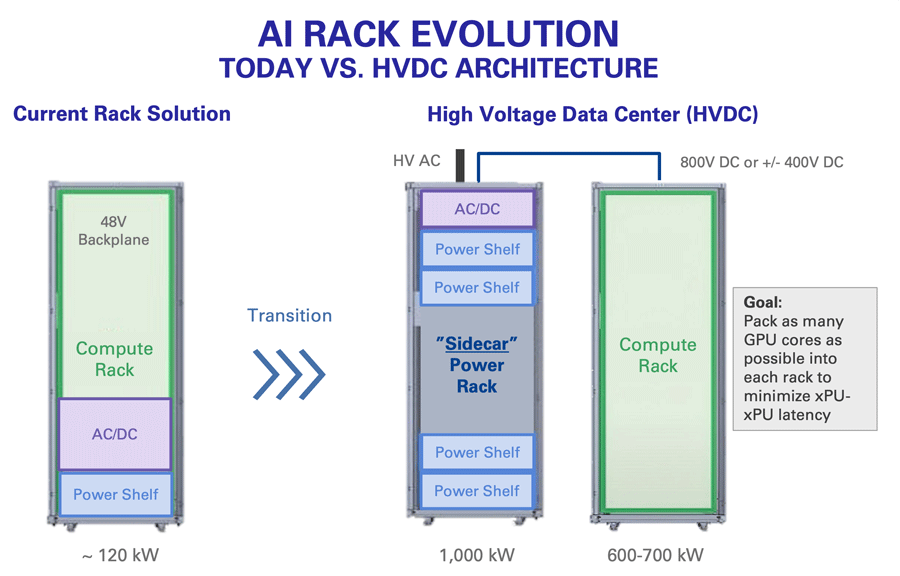

What distinguishes AI data centers from earlier generations of cloud infrastructure is not only higher power use but also a sharp increase in how dynamic that power has become. A server rack in a conventional data center might top out at 30 kilowatts. Today's AI systems run at roughly 50 to 300 kilowatts per rack (depending on the supplier) and are on pace to exceed one megawatt per rack as soon as next year. When rack power scales that quickly, power distribution and power conversion can no longer be treated as background concerns.

For AI adoption to scale sustainably, power architectures must become more efficient, compact, and adaptable. Power, once treated as a routine operational expense, has become a complex, capital-intensive design consideration, and it is likely to remain one as long as larger AI models continue to feed the appetite for compute.

AI Drives New Power Architectures

One of the clearest signs of this transformation is the move away from traditional 415V AC distribution toward 800V DC (or ±400V DC) architectures. For a given power level, a higher voltage means lower current, which minimizes conduction losses and improves system efficiency. These architectures also change the demands placed on power conversion stages and the devices that enable them.
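The current-versus-voltage argument can be made concrete with I = P / V and the I²R conduction loss. The sketch below is a simplified illustration that assumes a hypothetical 1 MW rack load and a hypothetical 10 µΩ distribution path, with the same conductor used at every voltage. It compares DC bus voltages only. The 415V AC case is left out because its three-phase current also depends on power factor and conductor count.

```python
def dc_current_a(power_w: float, voltage_v: float) -> float:
    """Current on a DC bus delivering power_w at voltage_v: I = P / V."""
    return power_w / voltage_v


def conduction_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """I^2 * R loss in a distribution path of resistance_ohm carrying power_w at voltage_v."""
    current = dc_current_a(power_w, voltage_v)
    return current ** 2 * resistance_ohm


RACK_POWER_W = 1_000_000     # hypothetical 1 MW rack
PATH_RESISTANCE_OHM = 10e-6  # hypothetical 10 micro-ohm busbar/cable path

for bus_v in (48, 400, 800):
    i = dc_current_a(RACK_POWER_W, bus_v)
    loss = conduction_loss_w(RACK_POWER_W, bus_v, PATH_RESISTANCE_OHM)
    print(f"{bus_v:>4} V bus: {i:>9,.0f} A, {loss:>8,.1f} W conduction loss")
```

Current falls as 1/V and loss scales with I². On the same conductor, moving from 48V to 800V therefore cuts I²R loss by (800/48)², roughly 278 times.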

Look inside a server rack and the AI accelerator emerges as the prime mover in the race to reimagine data center power. These compute engines aren't just chips anymore; they're large, distributed systems. A single AI pod, or "super cluster," can house as many as 9,000 accelerators, 4,500 CPUs, a vast network of optical interconnects, and a supporting cast of power management, pumps, and liquid cooling infrastructure. That is a single instance, and a hyperscale data center can deploy hundreds of them per year.

Fewer Conversions, Higher Voltages

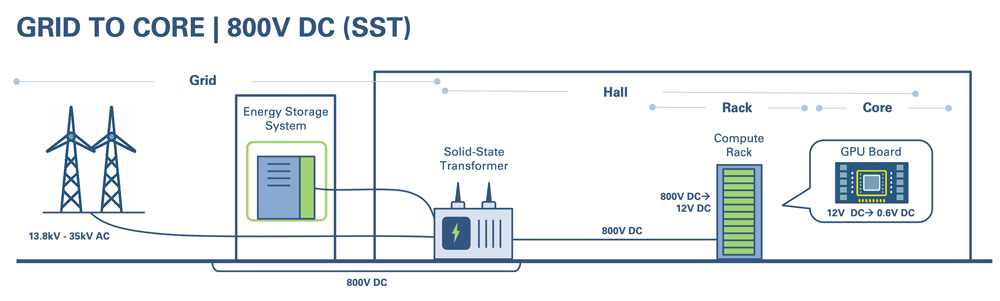

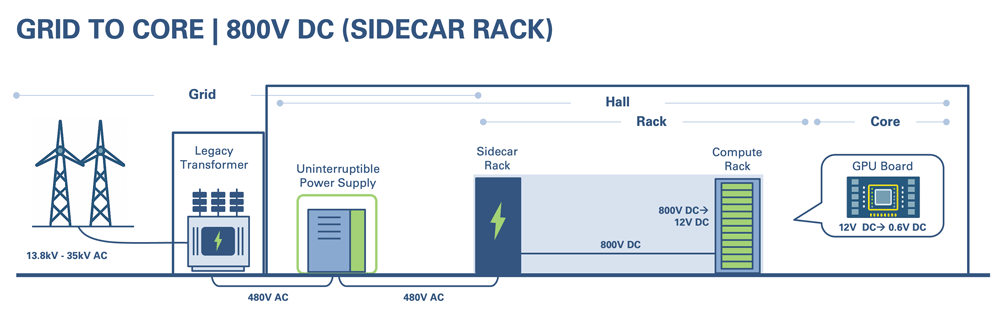

As the pressure builds, data center operators are reconsidering how power moves from the grid to silicon. One major shift is toward higher-voltage DC distribution, including 800V DC (or ±400V DC) architectures, with some industry discussions extending to 1,200V or 1,600V DC in the longer term.

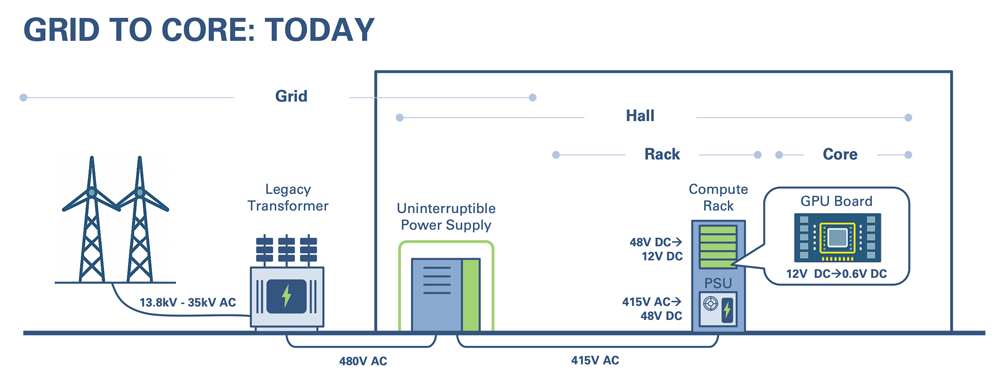

The motivation is intuitive. Every intermediate power conversion stage adds loss. In a traditional topology, power may enter as AC (480V 3-phase), convert to DC for battery charging, convert back to AC (415V) for distribution, and then convert again to DC (48V) for rack and board-level use. Reducing the number of conversion steps improves end-to-end efficiency and increases the share of grid power that actually reaches compute.
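Because conversion stages are connected in series, their efficiencies multiply. Every stage removed therefore removes its loss from the product. The sketch below uses the traditional chain described above. All per-stage efficiencies are hypothetical placeholders chosen only to show the arithmetic, not measured values. The two-stage "800V DC" chain is likewise a simplified, hypothetical path rather than a specific design.

```python
import math


def end_to_end_efficiency(stage_efficiencies) -> float:
    """Overall efficiency of conversion stages in series: the product of stage efficiencies."""
    return math.prod(stage_efficiencies)


# Hypothetical per-stage efficiencies, for illustration only.
traditional = {
    "480V AC -> DC (battery charging)": 0.97,
    "DC -> 415V AC (distribution)": 0.97,
    "415V AC -> 48V DC (rack)": 0.96,
}
fewer_stages = {
    "AC -> 800V DC": 0.98,
    "800V DC -> 48V DC": 0.975,
}

for name, chain in (("traditional", traditional), ("800V DC", fewer_stages)):
    eta = end_to_end_efficiency(chain.values())
    print(f"{name}: {eta:.1%} of grid power reaches the 48V bus, {1 - eta:.1%} lost")
```

Even with every stage in the high 90s, three stages in series lose close to 10% under these placeholder numbers. This is why cutting a stage matters as much as improving any single one.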

These changes are reshaping the role of power semiconductors. At the highest voltage levels, for example, the industry is moving to replace conventional oil-filled line transformers with solid-state alternatives. That shift favors power devices and architectures that can switch efficiently at higher frequencies while handling higher voltages.

At the same time, the rapid increase in rack power is pushing AC/DC conversion out of the server chassis and into dedicated sidecar racks. Today, AC-to-DC conversion hardware that steps 415V AC down to 48V DC for distribution consumes a significant portion of the available rack space. That is expensive real estate when space is at a premium. Centralizing these stages in a dedicated cabinet frees space for AI compute and lets designers manage heat more effectively.

Why GaN and Why Now?

GaN adoption has quickened because AI data centers magnify the value of two of its core advantages: high-voltage capability in a compact form factor, and fast, efficient switching.

GaN's higher critical electric field allows a GaN device to block a given voltage across a much smaller distance than a silicon device. GaN's high electron mobility and saturation velocity enable switching frequencies into the megahertz range, while silicon power devices typically switch well below that. GaN also has lower intrinsic parasitic capacitance than silicon, enabling faster switching with reduced losses.
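Two back-of-envelope estimates make these device-level claims concrete. The first is an idealized, uniform-field estimate of the distance needed to block a voltage, L = V / E_crit. It uses approximate critical-field values commonly quoted for Si (about 0.3 MV/cm) and GaN (about 3.3 MV/cm). The absolute lengths are simplified, but the roughly tenfold ratio follows directly from the critical-field ratio. The second estimate is the energy lost each hard-switched cycle in the output capacitance, P = ½·C·V²·f. It assumes a linear capacitance, whereas real devices have nonlinear C_oss, and the 200 pF value is hypothetical.

```python
# Approximate critical electric field values in MV/cm, as commonly quoted;
# real devices are designed with margin below these limits.
E_CRIT_MV_PER_CM = {"Si": 0.3, "GaN": 3.3}


def min_drift_length_um(blocking_voltage_v: float, e_crit_mv_per_cm: float) -> float:
    """Idealized length needed to block a voltage, assuming a uniform field
    at the critical value: L = V / E_crit."""
    e_crit_v_per_cm = e_crit_mv_per_cm * 1e6
    return blocking_voltage_v / e_crit_v_per_cm * 1e4  # convert cm to micrometers


def coss_loss_w(c_oss_f: float, voltage_v: float, f_sw_hz: float) -> float:
    """Loss from discharging an (assumed linear) output capacitance once per
    hard-switched cycle: P = 0.5 * C * V^2 * f."""
    return 0.5 * c_oss_f * voltage_v ** 2 * f_sw_hz


for material, e_crit in E_CRIT_MV_PER_CM.items():
    print(f"{material}: ~{min_drift_length_um(800, e_crit):.1f} um to block 800 V")

# Hypothetical 200 pF output capacitance switched at 400 V:
for f in (100e3, 1e6):
    print(f"{f / 1e3:>6.0f} kHz: {coss_loss_w(200e-12, 400, f):.1f} W")
```

Capacitive switching loss grows linearly with frequency. Pushing a design from 100 kHz to 1 MHz therefore multiplies this loss tenfold unless capacitance drops, which is why low parasitic capacitance matters for megahertz operation. Soft-switching topologies can recover much of this energy, and this simple model ignores that.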

In system terms, the size of magnetics and other passive components scales roughly inversely with switching frequency. If designers can increase switching frequency, they can shrink magnetics and reduce the footprint of power conversion stages. The consumer electronics world has already demonstrated this with miniature fast chargers. AI infrastructure is now pulling the same lever, but at kilowatt and megawatt scales.
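The inverse relationship between switching frequency and magnetics is easiest to see in an ideal buck converter in continuous conduction. There, the inductance needed for a given ripple current is L = (V_in − V_out)·D / (ΔI·f_sw), with D = V_out / V_in. The sketch assumes a hypothetical 48V to 12V stage with a 10 A peak-to-peak ripple target. A non-isolated buck is used only because it is the simplest case; it is not meant to represent a specific data center converter.

```python
def buck_inductance_h(v_in: float, v_out: float, ripple_a: float, f_sw_hz: float) -> float:
    """Inductance for a target peak-to-peak ripple current in an ideal buck converter
    in continuous conduction: L = (V_in - V_out) * D / (ripple * f_sw), D = V_out / V_in."""
    duty = v_out / v_in
    return (v_in - v_out) * duty / (ripple_a * f_sw_hz)


# Hypothetical 48 V -> 12 V stage with a 10 A peak-to-peak ripple target.
for f_sw in (100e3, 500e3, 1e6):
    l_uh = buck_inductance_h(48, 12, 10, f_sw) * 1e6
    print(f"{f_sw / 1e3:>6.0f} kHz -> {l_uh:.2f} uH")
```

A tenfold increase in frequency cuts the required inductance tenfold. At the same load current, a smaller inductance stores less energy (½·L·I²), and that reduction is what allows the magnetic component to shrink.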

The GaN Sweet Spot: 800V to 48V and Bidirectional Power

In the AI data center power chain, different voltage domains favor different materials. At multi-kilovolt levels, silicon carbide (SiC) is well suited for solid-state transformer applications. GaN's most appropriate near-term domain in data centers is the conversion stage from around 800V down to intermediate voltages like 48V and, in some cases, 12V. That 800V to 48V stage is a sweet spot where GaN provides high efficiency, robust operation, and higher switching speeds than silicon.

AI data centers are also implementing bidirectional power flow in AC/DC conversion. The driver is the extreme transient behavior of large AI accelerators. When loads change quickly, energy stored in capacitive elements can either be dissipated as loss or managed intelligently. Bidirectional architectures let energy flow from local storage into the system during load surges and back into storage when the load drops and the surplus is no longer needed, similar in concept to automotive regenerative braking systems.
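One way to picture bidirectional flow is as a simple energy balance. Local storage discharges when the load exceeds a fixed supply setpoint and recharges when the load falls below it. The sketch below is an idealized illustration, not a converter control algorithm. The function name, the pulsing load profile, and the choice of the average load as the setpoint are all hypothetical. Conversion losses and storage capacity limits are ignored.

```python
def buffer_with_storage(load_kw, grid_setpoint_kw, initial_energy_kj, dt_s=1.0):
    """Hypothetical energy balance: hold supply at a fixed setpoint and let
    bidirectional storage cover the difference.

    Positive storage power = discharging into the load (surge);
    negative storage power = absorbing surplus energy (recharge).
    Ignores conversion losses and storage capacity limits.
    """
    storage_kw = []
    energy_kj = [initial_energy_kj]
    for p in load_kw:
        p_storage = p - grid_setpoint_kw
        storage_kw.append(p_storage)
        energy_kj.append(energy_kj[-1] - p_storage * dt_s)  # kW * s = kJ
    return storage_kw, energy_kj


load = [300, 900, 300, 900]  # hypothetical kW samples of a pulsing accelerator load
setpoint = sum(load) / len(load)  # hold supply at the average load
storage, energy = buffer_with_storage(load, setpoint, initial_energy_kj=1000)
print(storage)
print(energy)
```

When the setpoint equals the average load, the storage ends each cycle where it started, so the surges are covered by energy recaptured during the dips. Real systems must also respect storage capacity, round-trip efficiency, and response-time limits.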

Bidirectional GaN devices simplify these designs. Where a conventional design might use a full bridge built from four discrete MOSFETs, for example, two bidirectional GaN devices can suffice. Renesas recently announced our first bidirectional GaN switch, which enables single-stage power conversion in place of traditional two-stage architectures for improved efficiency.

Renesas Defines GaN as a System Solution

AI data center innovation is moving too quickly for designers to optimize their systems component by component. Product cycles, once measured in three to four years, have compressed to 12 to 15 months. That means AI data center architects need end-to-end power solutions and comprehensive, long-term road maps, not piecemeal portfolios that shift risk onto the system designer.

Drawing on decades of power expertise, Renesas recognized that easing GaN adoption and shrinking design cycles requires a coordinated set of co-designed controllers, gate drivers, and protection devices, backed by reference designs and technical support. Our GaN solution, recently announced at the Applied Power Electronics Conference (APEC), demonstrates how this system-level approach translates into faster, lower-risk designs.

Renesas' acquisition of Transphorm and its high-voltage SuperGaN® D-mode GaN FET technology has further strengthened our position. By providing data center OEMs with a combination of device-level innovation and system-level support, we promote faster evaluation and shorter design cycles.

Adoption Barriers and the Road Ahead

Despite its advantages, GaN adoption is not without challenges. Cost, qualification requirements, and design familiarity all influence adoption rates. Over the next three to five years, the pace of GaN integration in AI data centers will depend on how effectively vendors address these concerns through education, tooling, and demonstrated reliability.

What is clear is that AI workloads will continue to push power architectures toward higher density and efficiency. For data center architects, GaN is a way to drive AI growth without unsustainable increases in energy consumption. For power semiconductor vendors used to incremental efficiency gains, it marks a fundamental shift in how power is delivered. As that shift unfolds, trade-offs that once favored silicon will increasingly give GaN an edge. The question now is not whether GaN has a role in AI data centers, but how quickly and broadly it will be deployed.