Vision AI, or computer vision, refers to technology that allows systems to sense and interpret visual data and make autonomous decisions based on an analysis of that data. These systems typically use camera sensors to acquire visual data, which is provided as input activations to a neural network trained on large image datasets to recognize images. Vision AI enables many applications, including industrial machine vision for fault detection, autonomous vehicles, face recognition in security applications, image classification, object detection and tracking, medical imaging, traffic management, road condition monitoring, and customer heatmap generation.

In my previous blog, Power Your Edge AI Application with the Industry's Most Powerful Arm MCUs, I discussed some of the key performance advantages of the RA8 Series MCUs with the Cortex®-M85 core and Helium that make them ideally suited for voice and vision AI applications. As discussed there, the availability of higher-performance MCUs, together with thin neural network models better suited to the resource-constrained MCUs used in endpoint devices, is enabling these kinds of edge AI applications.

In this blog, I will discuss a vision AI application built on the RA8D1 graphics-enabled MCUs, which feature the same Cortex-M85 core and use Helium to accelerate the neural network. RA8D1 MCUs combine advanced graphics capabilities, sensor interfaces, large memory, and the Cortex-M85 core with Helium, making them ideally suited for vision AI applications.

Graphics and Vision AI Applications with RA8D1 MCUs

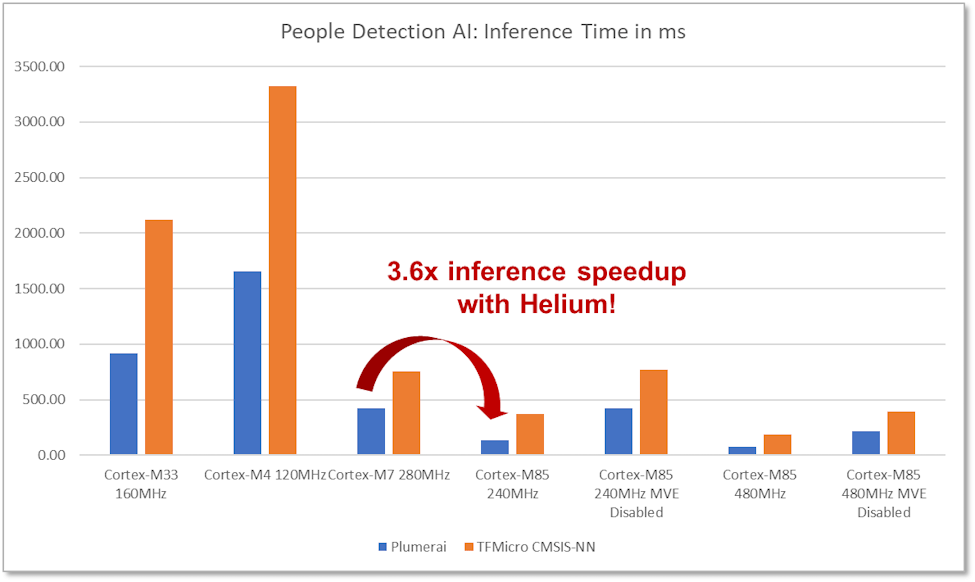

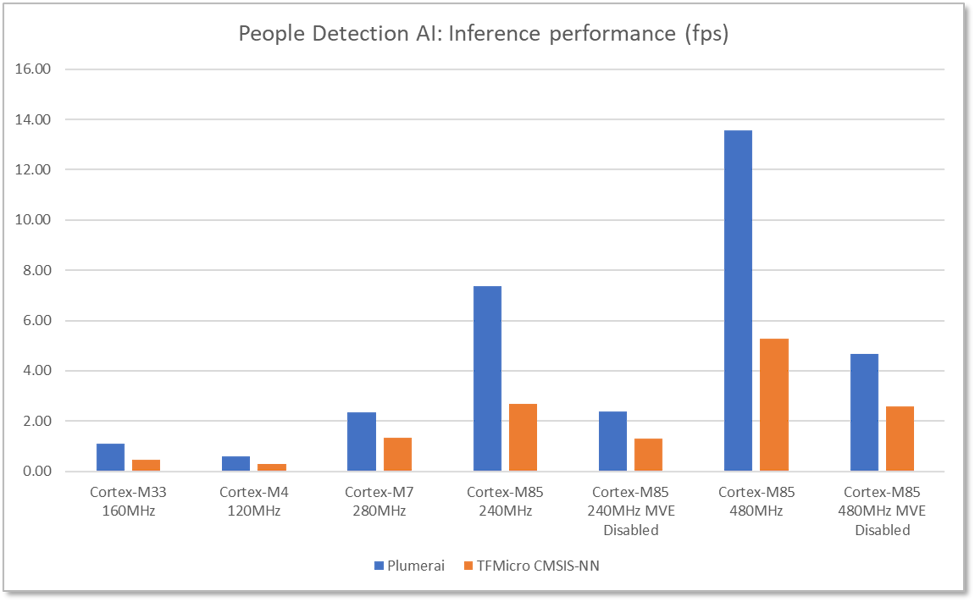

Renesas has demonstrated the performance uplift with Helium in various AI/ML use cases, showing significant improvement over a Cortex-M7 MCU: more than 3.6x in some cases.

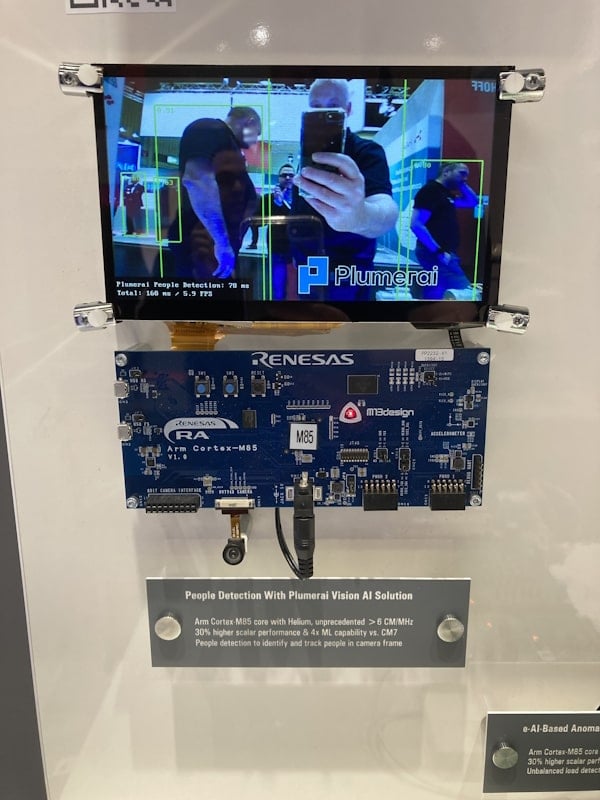

One such use case is a people detection application developed in collaboration with Plumerai, a leading provider of vision AI solutions. This camera-based AI solution has been ported to and optimized for the Helium-enabled Arm® Cortex®-M85 core, demonstrating both the performance and the graphics capabilities of the RA8D1 devices.

Accelerated with Helium, the application achieves a 3.6x performance uplift over a Cortex-M7 core and a frame rate of 13.6 fps, strong performance for an MCU without additional hardware acceleration. The demo platform captures live images from an OV7740 image-sensor-based camera at 640x480 resolution and presents the detection results on an attached 800x480 LCD. The software detects and tracks each person within the camera frame, even if partially occluded, and draws a bounding box around each detected person, overlaid on the live camera display.
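To put the frame rate in perspective, the short sketch below converts the quoted 13.6 fps into an average time and cycle budget per frame, using the 480MHz core clock listed later in this post. It is plain arithmetic on the published figures, not a measurement, and the function names are illustrative only. It makes no assumption about which pipeline stages the quoted frame rate covers.

```python
CPU_HZ = 480_000_000  # core clock quoted in this post for the RA8D1
FPS = 13.6            # frame rate quoted for the people detection demo


def frame_budget_ms(fps: float) -> float:
    """Average time available per frame, in milliseconds."""
    return 1000.0 / fps


def cycles_per_frame(cpu_hz: int, fps: float) -> float:
    """Average number of CPU clock cycles that elapse per frame."""
    return cpu_hz / fps


if __name__ == "__main__":
    print(f"{frame_budget_ms(FPS):.1f} ms per frame")
    print(f"{cycles_per_frame(CPU_HZ, FPS) / 1e6:.1f} million cycles per frame")
```

At 13.6 fps, each frame takes roughly 73.5 ms on average, or about 35.3 million clock cycles at 480MHz.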

Plumerai's people detection software uses a multi-layer convolutional neural network trained on over 32 million labeled images. The layers that account for the majority of the total latency are Helium accelerated, including the Conv2D and fully connected layers as well as the depthwise convolution and transpose convolution layers.
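As a rough illustration of why these layer types tend to dominate inference time, the sketch below counts multiply-accumulate (MAC) operations for a standard convolution, a depthwise convolution, and a fully connected layer. The formulas are the standard ones; the layer shapes in the example are hypothetical and are not taken from Plumerai's model. MAC count is only a proxy for latency, since memory access patterns also matter.

```python
def conv2d_macs(out_h: int, out_w: int, kernel: int, c_in: int, c_out: int) -> int:
    """MACs for a standard KxK convolution: every output channel reads every input channel."""
    return out_h * out_w * kernel * kernel * c_in * c_out


def depthwise_conv_macs(out_h: int, out_w: int, kernel: int, channels: int) -> int:
    """MACs for a KxK depthwise convolution: each channel is filtered independently."""
    return out_h * out_w * kernel * kernel * channels


def fully_connected_macs(n_in: int, n_out: int) -> int:
    """MACs for a dense layer: one multiply-accumulate per weight."""
    return n_in * n_out


if __name__ == "__main__":
    # Hypothetical layer shape, chosen only to illustrate the formulas.
    out_h, out_w, kernel, c_in, c_out = 48, 64, 3, 16, 32
    print("Conv2D MACs:   ", conv2d_macs(out_h, out_w, kernel, c_in, c_out))
    print("Depthwise MACs:", depthwise_conv_macs(out_h, out_w, kernel, c_in))
    print("Dense MACs:    ", fully_connected_macs(256, 10))
```

Because all three layer types reduce to long runs of multiply-accumulates, they are natural candidates for vector instructions such as Helium.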

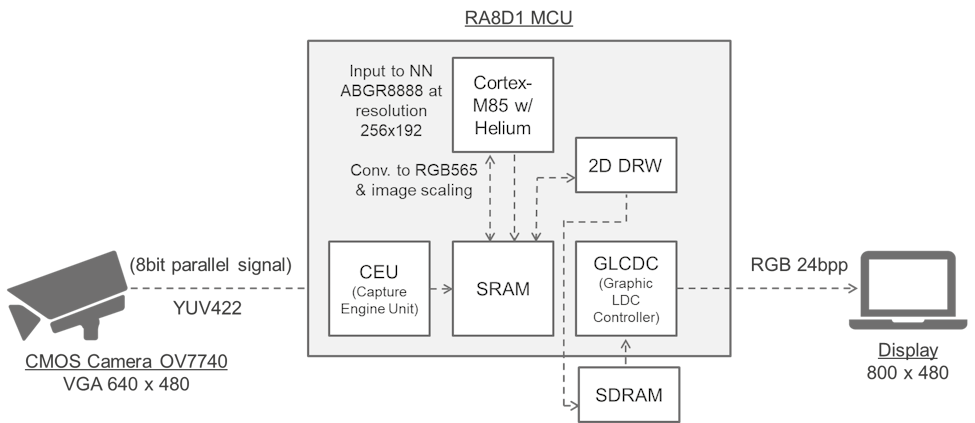

The camera module provides images in YUV422 format, which is converted to RGB565 format for display on the LCD screen. The 2D graphics engine integrated on the RA8D1 resizes the RGB565 image to 256x192 and converts it to ABGR8888 for input to the neural network. The software then converts the ABGR8888 image to the neural network model's input format and runs the people detection inference function. The graphics LCD controller and 2D drawing engine on the RA8D1 render the camera input to the LCD screen, draw bounding boxes around detected people, and present the frame rate. The people detection software uses roughly 1.2MB of flash and 320KB of SRAM, including the memory for the 256x192 ABGR8888 input image.
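The pixel format chain described above can be sketched in a few lines of Python. This is a host-side illustration only, not the demo's firmware: on the RA8D1 part of this work is done by the 2D graphics engine. Several details are assumptions that the post does not specify: the YUYV byte order for YUV422, full-range BT.601 conversion coefficients, and an ABGR8888 word layout with alpha in the top byte and red in the bottom byte. All function names are hypothetical.

```python
def clamp8(x: float) -> int:
    """Round and clamp a value to the 0..255 range of an 8-bit channel."""
    return max(0, min(255, int(round(x))))


def yuv_to_rgb(y: int, u: int, v: int) -> tuple:
    """Convert one YUV sample to 8-bit RGB (assumes full-range BT.601)."""
    d, e = u - 128, v - 128
    r = clamp8(y + 1.402 * e)
    g = clamp8(y - 0.344136 * d - 0.714136 * e)
    b = clamp8(y + 1.772 * d)
    return r, g, b


def pack_rgb565(r: int, g: int, b: int) -> int:
    """Pack 8-bit RGB into a 16-bit RGB565 word (5 bits R, 6 bits G, 5 bits B)."""
    return ((r >> 3) << 11) | ((g >> 2) << 5) | (b >> 3)


def yuyv_to_rgb565(data: bytes) -> list:
    """Convert YUV422 data (assumed Y0 U Y1 V order) to RGB565 pixels.

    Each 4-byte group encodes two pixels that share one U and one V sample.
    """
    pixels = []
    for i in range(0, len(data) - 3, 4):
        y0, u, y1, v = data[i:i + 4]
        pixels.append(pack_rgb565(*yuv_to_rgb(y0, u, v)))
        pixels.append(pack_rgb565(*yuv_to_rgb(y1, u, v)))
    return pixels


def rgb565_to_abgr8888(pixel: int) -> int:
    """Expand RGB565 to a 32-bit word with assumed layout A[31:24] B[23:16] G[15:8] R[7:0]."""
    r5 = (pixel >> 11) & 0x1F
    g6 = (pixel >> 5) & 0x3F
    b5 = pixel & 0x1F
    # Replicate the high bits into the low bits so full scale maps to 255.
    r8 = (r5 << 3) | (r5 >> 2)
    g8 = (g6 << 2) | (g6 >> 4)
    b8 = (b5 << 3) | (b5 >> 2)
    return (0xFF << 24) | (b8 << 16) | (g8 << 8) | r8


def input_buffer_bytes(width: int = 256, height: int = 192, bytes_per_pixel: int = 4) -> int:
    """Size of the neural network input image at 4 bytes per ABGR8888 pixel."""
    return width * height * bytes_per_pixel


if __name__ == "__main__":
    grey = yuyv_to_rgb565(bytes([128, 128, 128, 128]))
    print([hex(p) for p in grey])
    print(hex(rgb565_to_abgr8888(0xF800)))
    print(input_buffer_bytes() // 1024, "KiB")
```

At 4 bytes per pixel, the 256x192 input image alone occupies 196,608 bytes (192 KiB), which accounts for a large share of the roughly 320KB of SRAM quoted above.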

Benchmarking was done to compare the latency of Plumerai’s people detection solution with that of the same neural network running on TensorFlow Lite Micro (TF Micro) using Arm’s CMSIS-NN kernels. For the Cortex-M85, both solutions were also benchmarked with Helium (MVE) disabled. The benchmark data shows pure inference performance and does not include the latency of the graphics functions, such as image format conversions.
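For readers who want to run a similar comparison on their own models, the sketch below shows one simple way to time only the inference call and express the result as a speedup ratio. It is a generic host-side harness, not the method used for the benchmark described here; `dummy_inference` is a hypothetical stand-in for a real inference function, and on an MCU the timing source would be a hardware timer or cycle counter rather than `time.perf_counter`.

```python
import statistics
import time


def median_latency_ms(fn, runs: int = 20, warmup: int = 3) -> float:
    """Median wall-clock latency of fn() in milliseconds, after a few warm-up calls."""
    for _ in range(warmup):
        fn()
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()  # time only the inference call, not any pre- or post-processing
        samples.append((time.perf_counter() - start) * 1000.0)
    return statistics.median(samples)


def speedup(baseline_ms: float, optimized_ms: float) -> float:
    """How many times faster the optimized run is than the baseline."""
    return baseline_ms / optimized_ms


def dummy_inference() -> None:
    """Hypothetical stand-in workload; replace with a real inference call."""
    sum(i * i for i in range(10_000))


if __name__ == "__main__":
    latency = median_latency_ms(dummy_inference)
    print(f"median latency: {latency:.3f} ms")
```

Using the median rather than the mean keeps a single slow run from skewing the comparison.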

This application makes optimal use of all the resources available on the RA8D1:

- High-performance 480MHz processor

- Helium for neural network acceleration

- Large flash and SRAM for storage of model weights and input activations

- Camera interface for capture of input images/video

- Display interface to show the people detection results

Renesas has also demonstrated multi-modal voice and vision AI solutions based on the RA8D1 devices that integrate visual wake words and face detection and recognition with speaker identification. RA8D1 MCUs with Helium can significantly improve neural network performance without the need for any additional hardware acceleration, thus providing a low-cost, low-power option for implementing AI and machine learning use cases.

References and Resources

- RA8D1 product page for technical documentation and samples

- EK-RA8D1 Evaluation Kit and example projects

- Plumerai people detection demo on Renesas RA8D1 MCUs

- Plumerai people detection solution