e-AI Solution

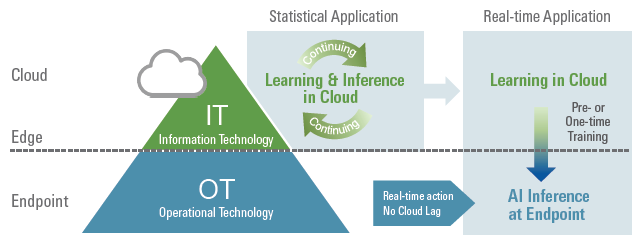

Renesas' embedded artificial intelligence (e-AI) solution enables artificial intelligence (AI) technology on embedded systems. AI consists of training and inference. Our e-AI solution enables the use of AI by running inference on MCUs and MPUs.

Learn More: What is Renesas' e-AI Solution?

Advantage of e-AI

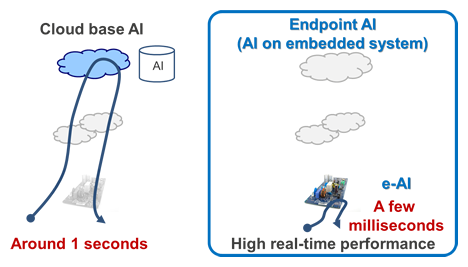

The biggest advantage of e-AI is real-time processing. Because inference runs on the device itself, e-AI can make judgments and respond without network delay, so results arrive faster than they would from the cloud. This makes e-AI well suited to applications in which continuous input data must be judged sample after sample in real time.
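The idea of judging continuous input data one sample after another can be sketched as a sliding-window loop. This is only an illustration: the `infer` function is a hypothetical stand-in for a trained model, and the window size, threshold, and sensor readings are invented for the example rather than taken from any Renesas tool.

```python
from collections import deque

WINDOW_SIZE = 4  # hypothetical number of samples per inference window


def infer(window):
    """Stand-in for an on-device model: flags a window whose mean magnitude is high."""
    mean_magnitude = sum(abs(x) for x in window) / len(window)
    return "abnormal" if mean_magnitude > 1.0 else "normal"


def judge_stream(samples, window_size=WINDOW_SIZE):
    """Yield one judgment per new sample once the window is full."""
    window = deque(maxlen=window_size)  # the oldest sample drops out automatically
    for sample in samples:
        window.append(sample)
        if len(window) == window_size:
            # Inference runs locally, so no network round trip is involved.
            yield infer(window)


# Invented sensor readings: quiet at first, then a burst of large values.
readings = [0.1, -0.2, 0.1, 0.0, 2.5, 3.0, -2.8, 2.9]
results = list(judge_stream(readings))
print(results)
```

Each new sample produces a fresh judgment as soon as it arrives, which is the pattern that benefits most from avoiding a round trip to the cloud.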

Renesas will continue to develop environmentally friendly solutions for a smart society that supports safer and healthier lifestyles through innovations from endpoint intelligence.

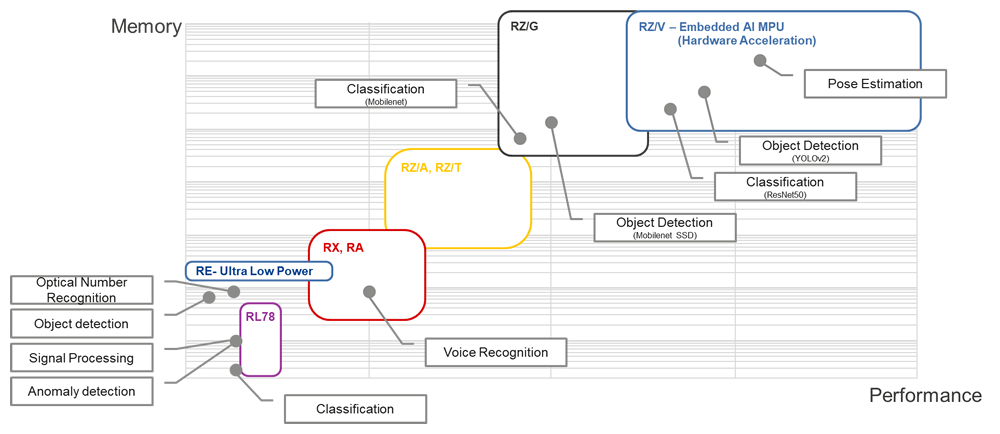

Optimal MCU/MPU Choices by AI Application Type

AI applications vary widely, and each has different requirements for memory size and performance. Refer to the following figure to choose the MCU/MPU best suited to your AI application.

For real application examples, see the videos or partner solutions.
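To see why memory requirements differ so much between applications, a back-of-the-envelope estimate can help. The sketch below is a rough approximation under stated assumptions: the parameter count and activation size describe a hypothetical network, and the rule of keeping two activation buffers alive at once is a simplification, not a description of how any Renesas tool allocates memory.

```python
def model_memory_bytes(param_count, bytes_per_param,
                       largest_activation_count, bytes_per_activation):
    """Rough estimate of weight storage (ROM/flash) and activation buffers (RAM)."""
    weight_bytes = param_count * bytes_per_param
    # Simplifying assumption: one layer's input and output buffers are live at once.
    activation_bytes = 2 * largest_activation_count * bytes_per_activation
    return weight_bytes, activation_bytes


# Hypothetical small network: 50,000 parameters, largest layer output of 4,096 values.
float32_rom, float32_ram = model_memory_bytes(50_000, 4, 4_096, 4)
int8_rom, int8_ram = model_memory_bytes(50_000, 1, 4_096, 1)

print(f"float32: {float32_rom / 1024:.1f} KiB weights, {float32_ram / 1024:.1f} KiB activations")
print(f"int8:    {int8_rom / 1024:.1f} KiB weights, {int8_ram / 1024:.1f} KiB activations")
```

Under these assumptions the same network needs about 195 KiB of weight storage in 32-bit floating point but about 49 KiB with 8-bit parameters, which can decide whether it fits a given device.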

e-AI Development Environment

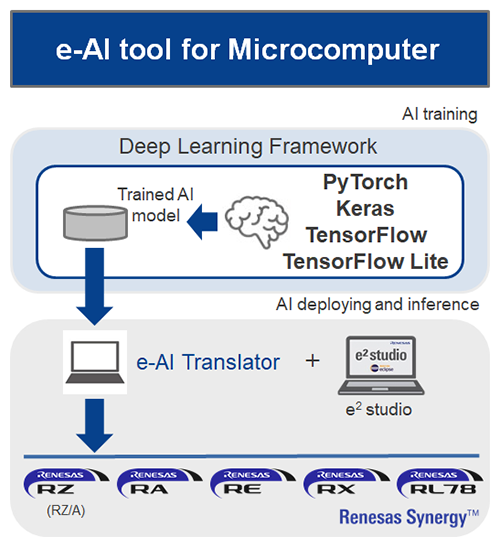

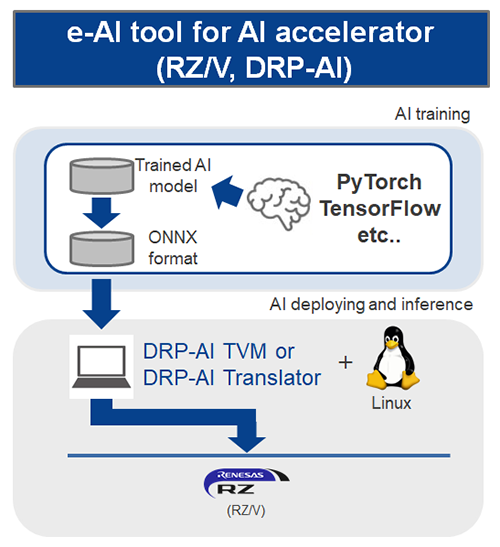

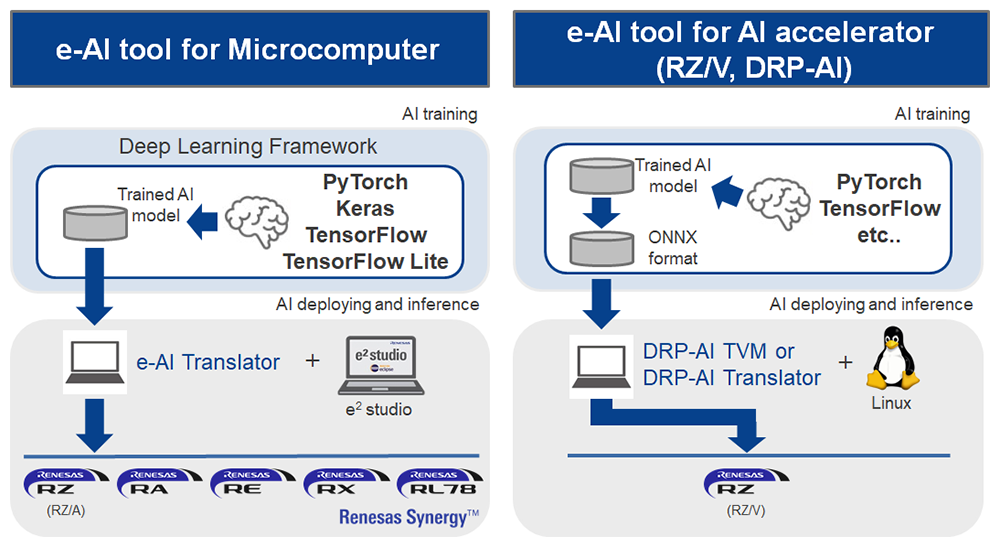

Renesas provides the e-AI development environment to make AI implementation on MCUs and AI accelerators easier. When a user inputs a trained AI model into the tool, the tool converts it into a program that runs on the MCU or AI accelerator, with no additional steps required.

Where to Download e-AI Development Environments

e-AI Development Environment for Microcomputers

This tool supports a variety of Renesas microcomputers. It converts trained "PyTorch", "Keras", or "TensorFlow" models, as well as 8-bit quantized "TensorFlow Lite" models, and imports them easily into e² studio, Renesas' integrated development environment. These Renesas products are suitable for relatively small-scale AI at the endpoint.
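For readers unfamiliar with the term, the sketch below shows what "8-bit quantized" means in general: floating-point values are mapped to signed 8-bit integers through a scale and a zero point. It is a generic affine quantization example written in plain Python with invented weights; it is not the e-AI Translator's implementation and does not use the TensorFlow Lite API.

```python
def quantize_params(min_val, max_val, qmin=-128, qmax=127):
    """Derive the scale and zero point for affine 8-bit quantization."""
    # The representable range must include 0.0 so that zero maps exactly.
    min_val = min(min_val, 0.0)
    max_val = max(max_val, 0.0)
    if max_val == min_val:
        return 1.0, 0  # all values are zero; any scale works
    scale = (max_val - min_val) / (qmax - qmin)
    zero_point = round(qmin - min_val / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers, clamping to the signed 8-bit range."""
    return [max(qmin, min(qmax, round(v / scale) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """Recover approximate floats: real = scale * (q - zero_point)."""
    return [(q - zero_point) * scale for q in q_values]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]  # invented example values
scale, zero_point = quantize_params(min(weights), max(weights))
q_weights = quantize(weights, scale, zero_point)
restored = dequantize(q_weights, scale, zero_point)

for original, q, approx in zip(weights, q_weights, restored):
    print(f"{original:+.4f} -> {q:4d} -> {approx:+.4f}")
```

Each weight is stored in one byte instead of four, at the cost of a small rounding error bounded by roughly one quantization step.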

[New]

e-AI Translator V3.1 is available. (Dec. 2023)

- Supports TensorFlow Lite APIs for real-time analysis (Conv1D and so on)

- Supports the “Group Convolution” API for accelerating AI calculation

The tutorial guides for e-AI Translator have been added/updated. (Sep. 2023)

- An RA6M5 e-AI Translator usage example has been added.

- The RX72N e-AI Translator usage example has been updated.
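As background on the "Group Convolution" item in the V3.1 notes above, the following sketch counts weights and multiply-accumulate operations for a 1-D convolution with and without groups. The layer dimensions are hypothetical, and the formula is the standard cost model for grouped convolution in general, not a measurement of the e-AI Translator.

```python
def conv1d_cost(in_channels, out_channels, kernel_size, output_length, groups=1):
    """Return (weight_count, multiply_accumulate_count) for a bias-free 1-D convolution."""
    if in_channels % groups or out_channels % groups:
        raise ValueError("groups must divide both in_channels and out_channels")
    # Each output channel only sees in_channels / groups input channels.
    weights = (in_channels // groups) * out_channels * kernel_size
    macs = weights * output_length
    return weights, macs


# Hypothetical layer: 32 input channels, 64 output channels, kernel of 3, 100 output steps.
standard = conv1d_cost(32, 64, 3, 100)
grouped = conv1d_cost(32, 64, 3, 100, groups=4)

print("standard:", standard)
print("grouped: ", grouped)
```

With four groups, both the weight count and the multiply-accumulate count drop to one quarter of the standard layer's, which is where the speed-up comes from.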

e-AI Development Environment for AI Accelerator

This tool supports the RZ/V series, which is equipped with the DRP-AI AI accelerator. It converts models in ONNX format, trained with frameworks such as "PyTorch", into DRP-AI object code. The RZ/V products are suited to relatively mid-scale AI for endpoints or edge computing.

Supported Products

These development environments support the following products:

| e-AI Translator | |
|---|---|
| RA Family | Ecosystem Partners |
| RZ/A Series | Ecosystem Partners |
| RL78 Family | Ecosystem Partners |
| RX Family | Ecosystem Partners |
| Renesas Synergy™ Platform | Ecosystem Partners |

| DRP-AI TVM / DRP-AI Translator | |
|---|---|
| RZ/V series | Ecosystem Partners |

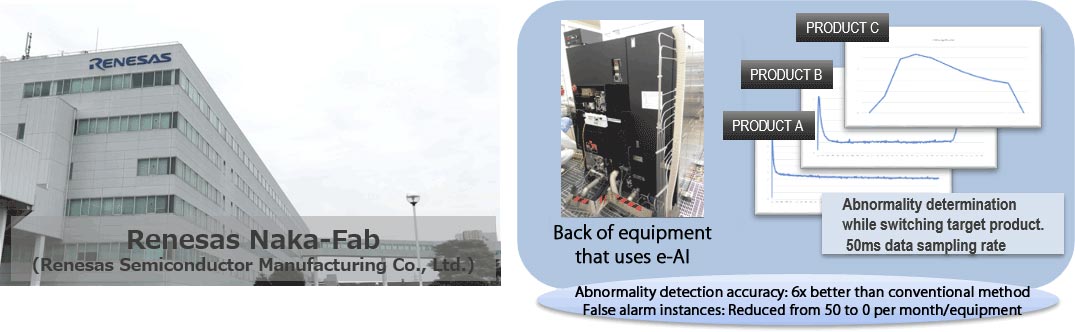

Our Experiment at the Renesas Naka Fab

In 2015, a demonstration experiment on equipment abnormality detection and predictive maintenance using e-AI was conducted at the Renesas Naka factory (Renesas Semiconductor Manufacturing Co., Ltd.), a semiconductor front-end factory in Hitachinaka City, Ibaraki Prefecture. The experiment showed that new added value, such as abnormality detection and predictive maintenance, can be achieved with existing facilities and without major remodeling. In addition, e-AI greatly improved detection precision for facility abnormalities that previously could only be judged properly by a skilled technician or operator. We have received inquiries from more than 40 customers in Japan and overseas, and have started discussions with more than 10 AI-related partners to turn this into concrete business. This example convinced customers that e-AI could contribute to their business and help solve social problems. At the Naka factory, preparations are now underway for the practical use of e-AI for abnormality detection and predictive maintenance.

Plug-ins for e² studio for connecting AI and embedded systems are available.